FAIR Data Principles Drive Better Scientific R&D

The FAIR guiding principles were first published in the journal Scientific Data in 2016 by a diverse group of stakeholders from academia, biopharma, financing, and publishing [1]. They establish data-management standards that aim to let humans and computers alike easily find and (re)use data, no matter its volume, complexity, or origin.

History of FAIR Data

The ultimate goal is to help extract maximum value from both publicly available and proprietary data in the near and long term. FAIR data standards have become more important in modern scientific R&D, where researchers must increasingly collaborate across disciplines, locations, and time zones, and rely on machines to handle growing data volumes, diverse data types, and disconnected data sources.

Definition of FAIR Data

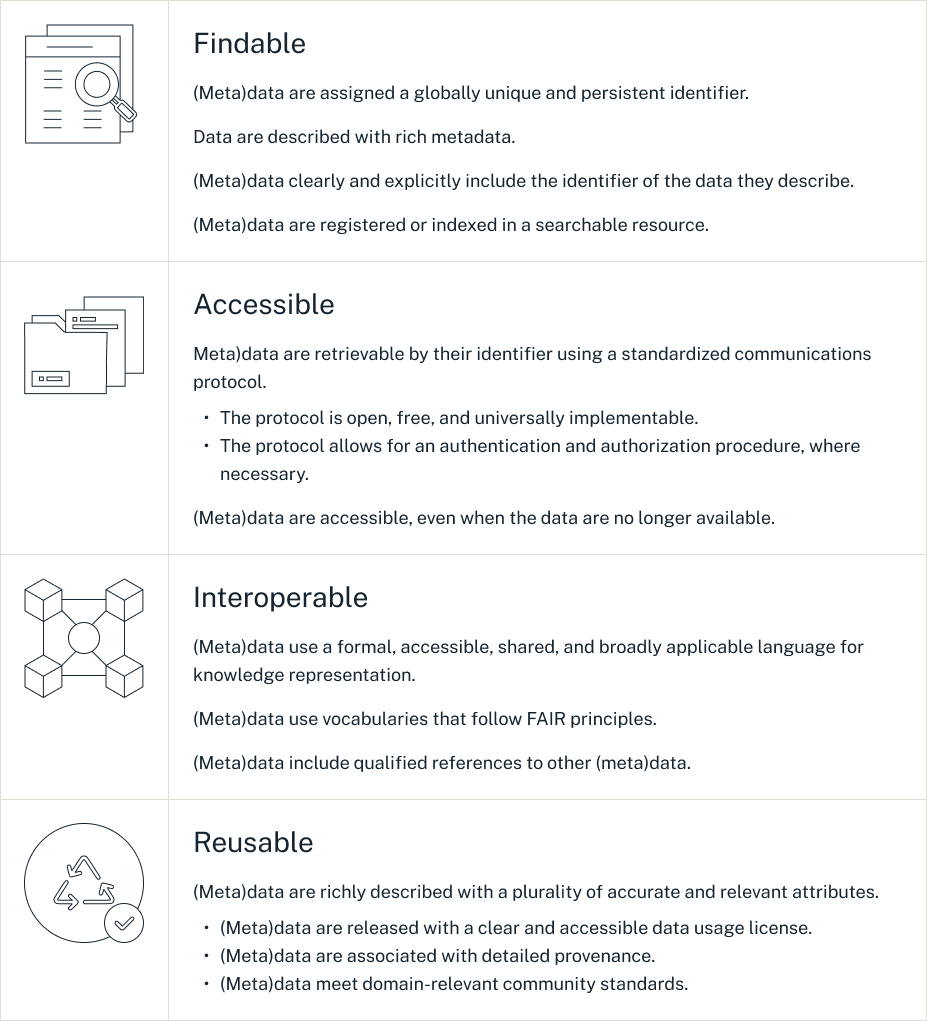

The FAIR Data Principles clarify steps that should be taken within four key areas to ensure all data and metadata are Findable, Accessible, Interoperable, and Reusable. Here are the principles:

Source: Go Fair

What FAIR Is NOT

The FAIR guiding principles are:

NOT domain-specific, but rather high-level, generally applicable, and individually executable.

NOT required to be adopted globally and all at once, but rather can be adopted gradually for specific use cases and datasets, or even applied retroactively to existing datasets.

NOT a requirement that data be free or openly accessible by default; FAIR data can be open if specified as such.

Why Is FAIR Data Important?

Key principles of data management achieved via FAIR implementation can have positive effects on many aspects of R&D, from efficiency to collaboration to costs.

Machine Readiness of FAIR Data

While machines offer incredible benefits in terms of the speed and scale at which they can process data, they lack the judgment, intuition, and contextual understanding that humans bring to the table. Making data FAIR is in essence making them more accessible to machines and humans alike.

FAIR data requirements help ensure that data assets include all the supplemental details machines need to identify, qualify, and use data, even data they have never encountered before. The metadata collected can vary and is generally informed by an organization's business rules. Organizations often build protocols that require the collection of specific metadata, such as author, date, process and methodology details, statistical data, license requirements, and authorization and access specifications. As such, it’s important to choose a software solution that accommodates this process.
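As a rough illustration of what such a metadata protocol could look like in practice, the sketch below checks a metadata record against a list of required fields. The field names (`author`, `date`, `methodology`, `license`, `access_level`) and the sample record are assumptions chosen to mirror the examples above; they are not taken from any particular standard or product.

```python
# Hypothetical required fields, loosely based on the examples in the text.
# A real organization would define its own list from its business rules.
REQUIRED_FIELDS = ("author", "date", "methodology", "license", "access_level")


def missing_metadata(record):
    """Return the required fields that are absent or empty in a metadata record."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = record.get(name)
        if value is None or not str(value).strip():
            missing.append(name)
    return missing


# Illustrative record: the license field was left blank at collection time.
record = {
    "author": "J. Doe",
    "date": "2022-10-21",
    "methodology": "dose-response assay, 8-point dilution",
    "license": "",
    "access_level": "internal",
}

print(missing_metadata(record))  # ['license']
```

A check like this could run when data is first captured, so that incomplete records are flagged before they reach downstream tools.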

Process Efficiency and Collaboration Benefits of FAIR Data Management

Implementing the FAIR guiding principles for scientific data management can help improve research efficiency and collaboration, potentially helping bring solutions to market faster. For example, implementing FAIR can:

Reduce the need for time-consuming and error-prone manual data handling (e.g., manual collection, pre-processing, cleaning, mapping, and transferring) by establishing more reliable and reproducible automated processes that move data through the various tools and applications used in the R&D process.

Lessen the chances of redundant research efforts by improving data sharing and insight into the work of colleagues, academics, CROs, and collaborators.

Help ensure data and research records persist even after staff turnover.

Innovation Benefits of FAIR Data (including AI and ML)

The ultimate goal of FAIR is increased innovation, not just better processes. FAIR can help spur innovation by making it easier for researchers to build upon knowledge gleaned in the past. Additionally, data that are clean, labeled, and machine-ready are best suited for advanced analytics like artificial intelligence (AI) and machine learning (ML).

The original FAIR publication clarified this point, saying, “Good data management is not a goal in itself, but rather is the key conduit leading to knowledge discovery and innovation, and to subsequent data and knowledge integration and reuse by the community after the data publication process.”[1]

Cost Benefits of FAIR Data

The streamlined processes, better collaboration, and faster innovation realized through FAIR data implementation can ultimately lead to cost savings. An analysis by PricewaterhouseCoopers on behalf of the European Commission estimated that a lack of FAIR research data costs the European economy at least €10.2bn annually.[4] Factors influencing the loss include time inefficiencies, storage and licensing costs, research duplication and retractions, and impeded innovation.

Regulatory Benefits of FAIR Data

Many companies implementing FAIR principles also implement data-quality assessment metrics at the same time, a combination approach sometimes referred to as FAIR + Q. This unified strategy helps best position companies to meet rigorous regulatory review, where data provenance and quality are also of utmost importance, not just data management and machine-readiness.[5]

In the pharmaceutical industry, the gold standard for data integrity is thought to be ALCOA+, which stands for Attributable, Legible and intelligible, Contemporaneous, Original, Accurate, + (complete, consistent, enduring, available).[6]

Who Is Using FAIR Data?

FAIR principles for scientific data have received strong support from global organizations like the G7, national governments, science funding agencies including the European Commission and the National Institutes of Health, and pharmaceutical leaders including Novartis, Pfizer, and GSK.[7] In fact, government legislation requiring data accessibility has passed in both the United States (Open, Public, Electronic, and Necessary Government Data Act) and Europe (EU Data Governance Act).[8,9]

FAIR Data in Academic Research

Academia has long been a leader in enhancing innovation via collaboration, data sharing, and iterative innovation. Its critical role in establishing and evangelizing FAIR data sharing principles is thus no surprise. Additionally, the importance of FAIR has become even more significant to academics as government agencies increasingly require data openness and accessibility for funding eligibility.

FAIR Data in Biology Research

Many biology researchers were early supporters of FAIR data management. For example, the Pistoia Alliance—a not-for-profit collaboration of life sciences companies, pharmaceutical leaders, vendors, publishers, and academic groups—publicized their support in a 2019 Drug Discovery Today feature article, where they said:

“Biopharmaceutical industry R&D, and indeed other life sciences R&D such as biomedical, environmental, agricultural and food production, is becoming increasingly data-driven and can significantly improve its efficiency and effectiveness by implementing the FAIR (findable, accessible, interoperable, reusable) guiding principles for scientific data management and stewardship. By so doing, the plethora of new and powerful analytical tools such as artificial intelligence and machine learning will be able, automatically and at scale, to access the data from which they learn, and on which they thrive. FAIR is a fundamental enabler for digital transformation.”[7]

The bioinformatics and crystallography data used in biology research are shared widely in open repositories. Researchers likely often encounter FAIR data when using resources such as the PDB, UniProt, or GenBank. But beyond these standardized and often open datatypes, many life science organizations are also outfitting their labs with research tools that support FAIR data principles from the earliest days of data collection through analysis and reporting.

Researchers may have tools like the Dotmatics electronic lab notebook (ELN) that can help ensure proper collection and management of lab data, even as the data types and research workflows evolve. Such ELNs should let researchers push raw data collected in the lab directly into analytics software, without wasting time or risking errors by manually preparing and transferring data.

For example, a researcher might want to pass assay data, and all associated metadata, for curve calculation, and then tie the results back into the Dotmatics ELN record file. The results should become part of a federated master data source so they will be easily searchable and re-usable in the future by colleagues with appropriate access permissions.
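The workflow described above can be sketched in a few lines. This is only an illustration under stated assumptions: the record layout, the `ELN-0001` identifier, and the function names are hypothetical and do not represent the Dotmatics API, and a simple log-linear interpolation stands in for a real curve fit (such as a four-parameter logistic model). The point is that the result carries its source metadata and a link back to the originating record.

```python
import math


def estimate_ic50(concentrations, responses):
    """Estimate IC50 by log-linear interpolation between the two points
    that bracket 50% response. Assumes all concentrations are positive."""
    points = sorted(zip(concentrations, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        crosses_50 = (r_lo - 50.0) * (r_hi - 50.0) <= 0
        if crosses_50 and r_lo != r_hi:
            frac = (50.0 - r_lo) / (r_hi - r_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # the response never crosses 50% in the measured range


def analyze(assay):
    """Return a result record that keeps the source metadata and record link."""
    return {
        "source_record_id": assay["record_id"],
        "metadata": dict(assay["metadata"]),
        "method": "log-linear interpolation at 50% activity",
        "ic50_uM": estimate_ic50(assay["concentrations_uM"], assay["percent_activity"]),
    }


# Hypothetical assay record as it might be exported from a lab notebook.
assay = {
    "record_id": "ELN-0001",
    "metadata": {"author": "J. Doe", "date": "2022-10-21", "assay_type": "enzyme inhibition"},
    "concentrations_uM": [0.1, 1.0, 10.0, 100.0],
    "percent_activity": [95.0, 80.0, 20.0, 5.0],
}

result = analyze(assay)
print(result["source_record_id"], round(result["ic50_uM"], 2))
```

Because the result record includes `source_record_id` and a copy of the metadata, a colleague who finds it later can trace it back to the original experiment without relying on the memory of whoever ran it.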

FAIR Data in Chemistry Research

Although chemistry research has not inherently reflected a FAIR culture, efforts to evolve have been ongoing. In 2019, the Chemistry Implementation Network (ChIN) published a manifesto calling for the industry to “Go FAIR”[10].

Other leading chemistry organizations, including the Research Data Alliance's Chemistry Research Data Interest Group (CRDIG) and the International Union of Pure and Applied Chemistry (IUPAC), have joined the cause, calling for the establishment of chemistry standards (e.g., naming conventions, structural representations, and characterization and reaction data), as well as the widespread adoption of R&D tools and infrastructure that aid in FAIR data collection, sharing, and analysis.[11]

Industry-wide support is growing. For example, there has been a call to make it easier for researchers to share chemical structure information in journal submissions.[12] Awards have been established to recognize the best chemistry FAIR datasets published each year.[13] And companies like Dotmatics are creating solutions that make it easier for chemists to annotate, track, and manage data throughout their chemistry workflows.

While change will be gradual, most experts agree, “The Chemistry community needs to create a FAIR culture which is supported by standards and infrastructure development promoting machine readability of chemical data and other digital resources.”[10]

FAIR Data in Chemicals and Materials R&D

Calls to “Go FAIR” have also been increasing in the chemical and materials industry, which has traditionally focused on experimental exploration and computational modeling, rather than any data-driven approach. In fact, a data-driven approach to chemicals and materials R&D has often been deemed too difficult to achieve because the complex workflows and data types used are thought to make process documentation and data exchange uniquely challenging.

That mindset is changing as companies like Dotmatics work to create a united platform for chemicals and materials R&D. In an April 2022 Nature perspective, leading materials experts argue that a fundamental paradigm shift toward data-driven materials R&D is necessary for the industry to thrive.[14] They propose that such change is essential to reaping value from a “gold mine” of available research data that has largely remained unleveraged, despite the potential it holds for use in advanced analytics and AI.

These experts support the adoption of FAIR data principles for materials R&D and explain that there is a great need for supportive data infrastructures and research tools, like ELNs or LIMS, that will help facilitate a shift toward data-driven materials R&D. The authors warn that scientists must get on board, saying, “The predicted changes brought about by a FAIR data infrastructure will not replace scientists, but scientists who use such an infrastructure may replace those who don’t.”[14]

Barriers to Implementing FAIR Data

Organizations looking to “Go FAIR” can help prepare for success by educating themselves about potential barriers they may face, including:

User Adoption and Change Management Challenges

A shift from a “my data” to “our data” mindset is essential for FAIR to work. Researchers need to be willing to get training and adjust their workflows to accommodate FAIR data principle guidelines. Leadership needs to be willing to make the investment in change and show how the initial extra effort will be worth it in the long run. Choosing technology solutions that are intuitive and flexible will help increase user-level adoption.

Data Diversity and Volume

The variety and volume of both structured and unstructured data types used in R&D mean companies must choose supportive technology that can accommodate diverse data types from the past, present, and future. In some cases, work must be done to retroactively make existing datasets FAIR. Therefore, infrastructure and software that can handle diverse data types are a must.

Ontology and Naming Issues

When industry-standard naming conventions don’t exist or are insufficient, the onus to establish consistency may fall upon research teams. Lack of consistency will mean lessened benefits from FAIR implementation as searching and analytics become muddied. Finding a solution provider with a flexible solution and expertise in this matter will be key.
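One common way teams establish this consistency is a controlled vocabulary that maps every known synonym to a single canonical term. The sketch below is a minimal illustration, assuming a small hand-maintained synonym table; the table contents and function names are hypothetical, and a real deployment would more likely draw on an established ontology or registry.

```python
from typing import Dict, Iterable, List, Optional, Tuple

# Hypothetical synonym table mapping free-text names to one canonical term.
SYNONYMS: Dict[str, str] = {
    "aspirin": "aspirin",
    "asa": "aspirin",
    "acetylsalicylic acid": "aspirin",
    "acetaminophen": "acetaminophen",
    "paracetamol": "acetaminophen",
}


def normalize_term(raw: str) -> Optional[str]:
    """Map a free-text name to its canonical term, or None if it is unknown."""
    key = " ".join(raw.lower().split())  # lowercase and collapse whitespace
    return SYNONYMS.get(key)


def normalize_terms(raw_terms: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Split input names into canonical terms and names needing manual review."""
    canonical: List[str] = []
    unmapped: List[str] = []
    for raw in raw_terms:
        term = normalize_term(raw)
        if term is None:
            unmapped.append(raw)
        else:
            canonical.append(term)
    return canonical, unmapped


canonical, unmapped = normalize_terms(["ASA", "Paracetamol", "  aspirin ", "compound-X"])
print(canonical)  # ['aspirin', 'acetaminophen', 'aspirin']
print(unmapped)   # ['compound-X']
```

Returning the unmapped names separately, rather than silently dropping them, gives the team a review queue for terms that still need a naming decision.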

Financial and Time Commitment

Implementing FAIR may take considerable time and expense. From technology adoption to process adjustments to training, the change can seem daunting. But FAIR principles can be rolled out strategically and gradually, helping both demonstrate ROI and gain buy-in along the way. A solution provider with industry expertise can help identify specific cases where FAIR implementation will reap the most reward.

Ready to Go FAIR?

Contact Dotmatics today to learn how we can help your research teams go FAIR.

References

[1] Wilkinson, M., Dumontier, M., Aalbersberg, I. et al. The FAIR Guiding Principles for scientific data management and stewardship. Sci Data, 2016, 3. https://doi.org/10.1038/sdata.2016.18

[2] FAIR Principles. https://www.go-fair.org/fair-principles/ (accessed October 21, 2022).

[3] Barker, M., Chue Hong, N.P., Katz, D.S. et al. Introducing the FAIR Principles for research software. Sci Data, 2022, 9. https://doi.org/10.1038/s41597-022-01710-x

[4] Cost-benefit analysis for FAIR research data. Publications Office of the European Union. PwC EU Services. 2018. https://op.europa.eu/en/publication-detail/-/publication/d375368c-1a0a-11e9-8d04-01aa75ed71a1/language-en (accessed October 26, 2022).

[5] Harrow, I., Balakrishnan, R., McGinty, HK., et al. Maximizing data value for biopharma through FAIR and quality implementation: FAIR plus Q. Drug Discovery Today, 2022, 27 (5). https://doi.org/10.1016/j.drudis.2022.01.006 (accessed October 25, 2022).

[6] ALCOA+ - what does it mean? ECA Academy. https://www.gmp-compliance.org/gmp-news/alcoa-what-does-it-mean (accessed November 7, 2022).

[7] Wise, J., Grebe de Barron, A., Splendiani, A., et al. Implementation and relevance of FAIR data principles in biopharmaceutical R&D. Drug Discovery Today, 2019, 24 (94). https://doi.org/10.1016/j.drudis.2019.01.008

[8] S.760 - Open, Public, Electronic, and Necessary Government Data Act. 115th Congress (2017-2018). Congress.gov. https://www.congress.gov/bill/115th-congress/senate-bill/760?q=%7B%22search%22%3A%5B%22Open+Government+Data+Act%22%5D%7D&s=1&r=1 (accessed October 25, 2022).

[9] Lomas, N. EU lawmakers agree data reuse rules to foster AI and R&D. TechCrunch. 2021. https://techcrunch.com/2021/12/01/data-governance-act-provisional-agreement/ (accessed October 25, 2022).

[10] Manifesto of the Chemistry GO FAIR Implementation Network. Chemistry - GO FAIR. https://www.go-fair.org/wp-content/uploads/2019/02/ChIN-Manifesto-final-20180905.pdf (accessed October 25, 2022).

[11] Coles, SJ., Frey, JG., Willighagen, EL., Chalk, SJ. Taking FAIR on the ChIN: The Chemistry Implementation Network. Data Intelligence, 2020, 2 (1-2). https://doi.org/10.1162/dint_a_00035 (accessed October 25, 2022).

[12] Schymanski, E.L., Bolton, E.E. FAIR chemical structures in the Journal of Cheminformatics. J Cheminform, 2021, 13 (50). https://doi.org/10.1186/s13321-021-00520-4

[13] Neumann, S. FAIR4Chem Award 2022: And the winner is … Chemistry! 2022. https://www.nfdi4chem.de/index.php/2022/03/30/fair4chem-award-2022-and-the-winner-is-chemistry/ (accessed October 26, 2022).

[14] Scheffler, M., Aeschlimann, M., Albrecht, M. et al. FAIR data enabling new horizons for materials research. Nature, 2022, 604. https://doi.org/10.1038/s41586-022-04501-x
