Ask any R&D scientist what happens after they finish an experiment, and you'll hear a version of the same story. The data exists. It's in the ELN, or in an attachment, or somewhere on the instrument computer. But getting it into a format that's actually usable, comparable, or shareable? That's a different project entirely.

ELN experimentation has, for years, involved some form of arts and crafts: things pasted together, connected through links, context added after the fact. Capturing how an experiment was actually run in a structured form is genuinely hard. And most labs have quietly accepted that as the cost of doing science.

Luma was built to change that. Here's how.

The attachment problem nobody talks about

Here's a scenario most bench scientists will recognize. You've run an experiment. Results come back. They land in your ELN as an attachment, which is fine for the moment — you can look at it, review it, sign it off. But two weeks later, when someone asks how those results compare to the last three runs? The attachment is useless. It's a static image. You can't interact with it, filter it, or stack it against anything else.

The real problem isn't that the data is bad. It's that it's stuck.

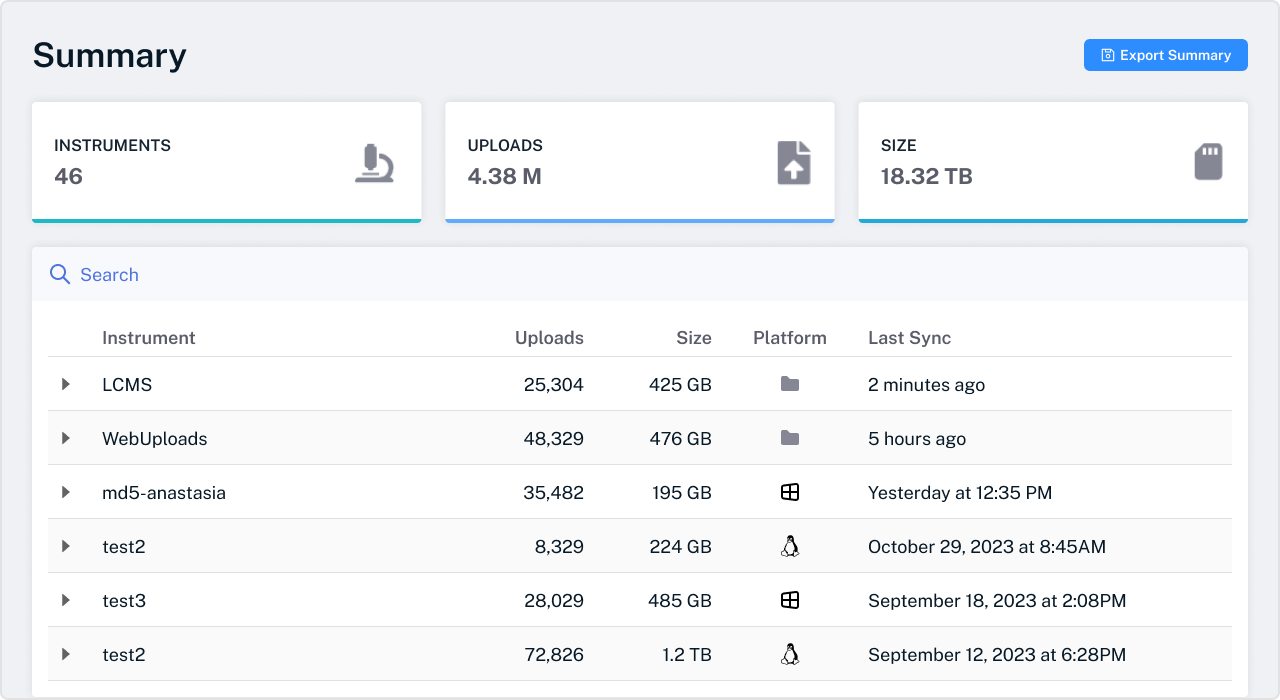

Luma connects instrument data directly to the ELN and surfaces it as a live, interactive dashboard rather than a file. Take X-ray diffraction data from two competing instrument vendors with completely different data backends: Luma harmonizes both into a single overlay dashboard, shared directly inside the notebook experiment in real time.

Not exported. Not reformatted. Not manually merged by someone in a spreadsheet on a Friday afternoon.

The dashboard is interactive. You can filter it, add calculations, change the view, and bookmark your preferred layout. And because it's linked to the source experiment by ID, there's a full audit trail from result back to notebook entry. For labs running multi-vendor instrument environments, that harmonization is the difference between data you can compare and data you can only look at in isolation.
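To make the idea of harmonization concrete, here is a minimal Python sketch. It is not Luma's implementation: both vendor formats, the `Scan` record, and every function name are hypothetical, chosen only to show how two differently shaped diffraction exports could be mapped onto one schema keyed by experiment ID.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scan:
    experiment_id: str      # notebook experiment the scan belongs to
    vendor: str
    two_theta: list[float]  # diffraction angle in degrees
    intensity: list[float]  # scaled so the strongest peak is 1.0


def _scale(values):
    """Scale intensities relative to the strongest peak."""
    peak = max(values)
    return [v / peak for v in values]


def from_vendor_a(experiment_id, text):
    """Vendor A (hypothetical): 'angle,counts' CSV text with a header row."""
    rows = [line.split(",") for line in text.strip().splitlines()[1:]]
    angles = [float(a) for a, _ in rows]
    counts = [float(c) for _, c in rows]
    return Scan(experiment_id, "A", angles, _scale(counts))


def from_vendor_b(experiment_id, payload):
    """Vendor B (hypothetical): start angle, step size, and a list of counts."""
    counts = payload["counts"]
    angles = [payload["start"] + i * payload["step"] for i in range(len(counts))]
    return Scan(experiment_id, "B", angles, _scale(counts))


def overlay(scans):
    """Flatten scans into long-format rows that a dashboard could plot together."""
    return [
        {"experiment_id": s.experiment_id, "vendor": s.vendor,
         "two_theta": t, "intensity": i}
        for s in scans
        for t, i in zip(s.two_theta, s.intensity)
    ]
```

Because every row carries the experiment ID, any point on the overlay can be traced back to the notebook entry it came from, whichever vendor produced it.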

One click from Prism to a structured dashboard

For chemistry teams, the Prism workflow is one of the most immediately practical things Luma changes.

Most labs using GraphPad Prism are doing some version of the same manual process: export data from the notebook, get it into Prism, run curve fits, then figure out how to get those results back somewhere useful. It works, but it's slow, error-prone, and creates yet another disconnected file that no one can easily find six months later.

Dotmatics products now natively write a Prism file directly from the ELN. One button sends your data across. Luma's Lab Connect system receives it, parses the proprietary Prism file format, breaks it into structured tables, recreates the graph images, and links the resulting dashboard back to the originating notebook experiment — all automatically, all traceable to the source experiment ID.

The same pattern works across chromatography, NMR, mass spec, X-ray diffraction, and most other major instrument types. Whoever made the instrument, the data lands in the same place and can be shared directly with the notebook.

What a closed-loop screening workflow actually looks like

There's a different kind of frustration that shows up further down the workflow: the manual handoff between the ELN and a screening system.

Running a dose-response experiment traditionally means someone manually dragging and dropping raw data files into the screening system or, if they're lucky, relying on a bespoke API integration that IT has built. Either way, there's human effort in the middle of a workflow that shouldn't require it.

With Luma, that handoff disappears. A scientist fills in the plate barcodes in the ELN and clicks request. Luma picks up those barcodes, collects the raw instrument data, matches it against the request, runs normalization, and pushes the processed results directly back into the screening tab in the ELN, ready for curve review.

No raw file handling. No parser selection. No waiting on IT. The scientist goes straight to analysis.
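The matching and normalization steps in that loop can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Luma's code: the function names and well layout are hypothetical, and percent inhibition against plate controls stands in for whatever normalization a given assay actually uses.

```python
from statistics import mean


def match_plates(requested_barcodes, instrument_files):
    """Pair each requested barcode with its raw data; list any with no data yet."""
    matched = {b: instrument_files[b] for b in requested_barcodes if b in instrument_files}
    missing = [b for b in requested_barcodes if b not in instrument_files]
    return matched, missing


def percent_inhibition(signal, neg_ctrl_mean, pos_ctrl_mean):
    """0% at the negative (uninhibited) control, 100% at the positive control."""
    return 100.0 * (neg_ctrl_mean - signal) / (neg_ctrl_mean - pos_ctrl_mean)


def normalize_plate(wells):
    """wells: dicts with 'well', 'role' ('neg', 'pos', or 'sample'), and 'signal'."""
    neg = mean(w["signal"] for w in wells if w["role"] == "neg")
    pos = mean(w["signal"] for w in wells if w["role"] == "pos")
    if neg == pos:
        raise ValueError("controls are indistinguishable; cannot normalize")
    return {
        w["well"]: percent_inhibition(w["signal"], neg, pos)
        for w in wells
        if w["role"] == "sample"
    }
```

Returning the unmatched barcodes explicitly, instead of dropping them silently, keeps the request auditable: a plate that has not been read yet shows up as missing rather than as an empty result.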

This isn't a narrow use case. Any lab running plate-based screening, automated assays, or instrument-triggered workflows can close that loop the same way. The manual steps in between don't need to exist.

The AI layer that actually shows its work

Agent Luma is woven throughout the platform, and it's worth being specific about what it does, because it's different from most AI implementations in scientific software.

The underlying principle is that Luma data is structured to begin with. That means the AI has less to infer and more to work with directly. It's a meaningful distinction from general-purpose AI tools that are essentially making educated guesses from unstructured text. Agent Luma is designed to be traceable, explainable, and verifiable — because science requires auditability in a way most industries don't.

In practice, that plays out in three distinct ways.

Natural language queries across complex datasets. A scientist can ask Agent Luma: "Compared to the best PK data we have in this app, how do the last two weeks look?" It surfaces the best PK (pharmacokinetic) compound, summarizes the last two weeks of results, flags clinical significance, and suggests the next round of experiments, answering in plain English against a multi-table dataset.

AI-generated experimental write-ups. Given the raw data from a chemistry experiment — reactants, products, stoichiometry, solvents — Agent Luma generates a first-pass write-up automatically. In one example, it identified that the reaction was a reductive amination, a fact that wasn't written anywhere in the ELN. It flagged a 43% yield as below what the conditions should achieve and suggested the solvent choice wasn't optimal. All from the data. No additional prompting.

App-building from scratch. Load chemistry screening files into Lab Connect, describe the objective, and Agent Luma inspects the files, builds the data model, writes the table structure, generates the raw data flows, and gets the application most of the way to production. That is configuration work that would typically take weeks.

The value isn't just automation. It's that the AI is operating on your actual scientific data, not generating plausible-sounding content around gaps in it.

The integration question every lab asks

The most common concern when labs evaluate a new data platform is migration. Switching tools means moving data, retraining people, and usually a months-long implementation project nobody has the capacity for.

Luma is designed to sidestep that concern entirely. It connects via files, API, or direct database connection, and it's ELN-agnostic — the integrations work across vendor notebooks, not just Dotmatics. Out of the box, it connects to LabArchives, third-party screening systems, gPROMS for process modeling, SYNTHIA for retrosynthesis, LabDonkey for lab scheduling, Revvity Signals, and more. The open API means almost anything can be plugged in.

Nothing needs to change before you can get value from it. The data you already have, in the tools you already use, is the starting point.
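One way to picture "files, API, or direct database connection" is as interchangeable sources behind a single interface. The Python sketch below is purely illustrative: `Source`, `CsvSource`, `SqlSource`, and `ingest` are hypothetical names, not part of any Luma API, and an API-backed source is left out to keep the example self-contained.

```python
import csv
import io
import sqlite3
from typing import Iterable, Protocol


class Source(Protocol):
    """Anything that can hand over records, whatever the transport."""
    def records(self) -> Iterable[dict]: ...


class CsvSource:
    """File-based source: reads rows from CSV text (values stay as strings)."""
    def __init__(self, text: str):
        self.text = text

    def records(self):
        return list(csv.DictReader(io.StringIO(self.text)))


class SqlSource:
    """Database source: runs a query on an existing connection."""
    def __init__(self, conn: sqlite3.Connection, query: str):
        self.conn, self.query = conn, query

    def records(self):
        cur = self.conn.execute(self.query)
        cols = [c[0] for c in cur.description]
        return [dict(zip(cols, row)) for row in cur.fetchall()]


def ingest(sources):
    """Collect records from every source in order."""
    return [rec for src in sources for rec in src.records()]
```

The point of the design is that the existing systems stay where they are: supporting another tool means writing one more class with a `records` method, not migrating its data.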

What this means for your lab

The problems Luma addresses — static attachments nobody can search, instrument data living in silos, manual handoffs that slow everything down, experimental write-ups that take hours — are not edge cases. They show up in almost every R&D environment, at every scale.

What Luma makes clear is that these aren't fundamental limitations of how lab data works. They're integration problems. And integration problems are solvable.

Watch our on-demand webinar on this topic to learn more.