Data without context is useless. The question, the protocol, the decisions, the sequence: these are what give experimental results their meaning. Unfortunately, that context is precisely what's missing from most current AI workflows, and without it, we will never see transformative agentic or autonomous science at scale.

How do we get there? We must radically redefine our approach, starting with how we think about some of the most basic aspects of the modern discovery cycle.

Redefining Lab Orchestration

The modern research lab is, by almost any measure, a marvel. Instruments run through the night. Robotic systems move samples with a precision no human hand could match. Scheduling software coordinates dozens of interdependent steps across multiple workstations, keeping experiments in motion with minimal intervention. Walk through a well-automated facility and you are watching years of engineering, investment, and ingenuity at work.

And yet, for all of that capability, something still breaks down. A result surfaces that no one can fully explain. A team tries to replicate a promising experiment and discovers that the original conditions were never fully captured. As a research program scales up with more instruments, more data, and more scientists, the cracks that were invisible at smaller volumes begin to widen. The lab is running. The science is struggling to keep up.

This is the problem that lab orchestration is meant to solve. But to solve it well, we need to be precise about what the problem actually is.

The first definition, and why it falls short

Ask most automation professionals what lab orchestration means, and they will describe something like this: the coordination of instruments, robotics, and scheduling systems into a synchronized physical workflow. Samples move seamlessly from station to station. Equipment is utilized efficiently. Data is captured and passed downstream. The physical lab works as one.

This is real and valuable work. It is also, on its own, incomplete.

Think of an orchestra playing without a score. Every musician is in their seat. The timing is precise. The notes are technically correct. But without the score (the record of what was intended, how each part relates to the others, and what the piece is actually trying to express), you cannot fully understand what you just heard. You cannot reproduce it faithfully. You cannot build on it. What you have is sound, not music.

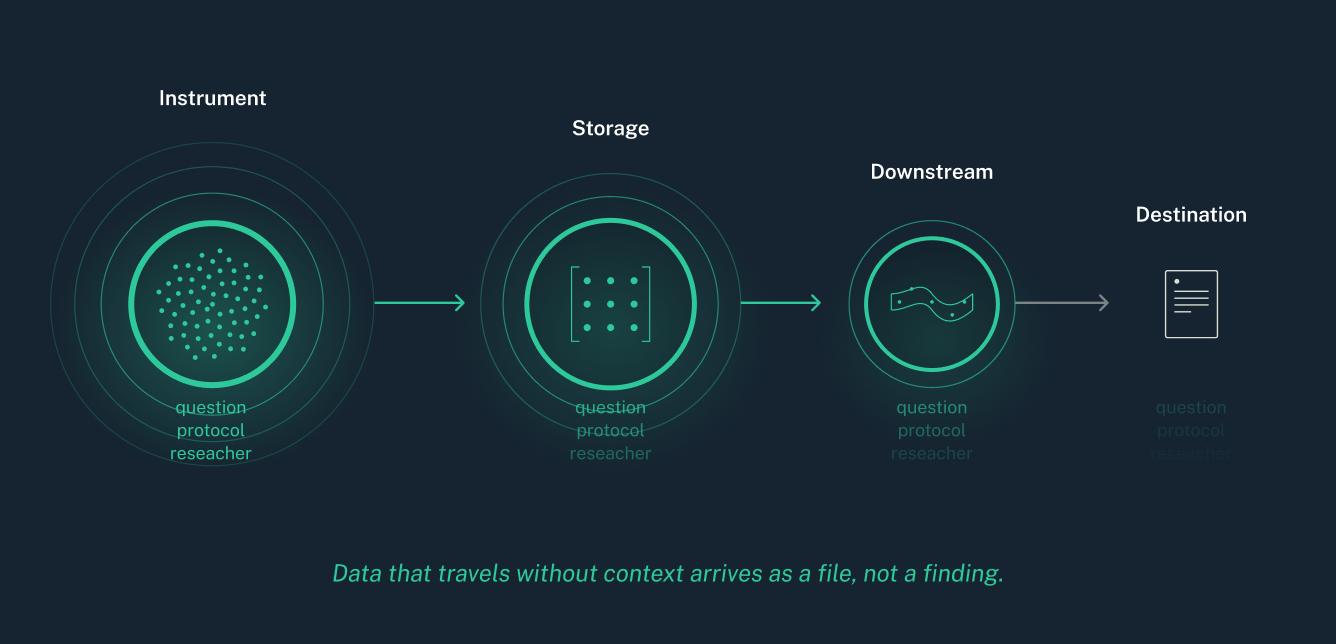

Data that flows out of a well-automated lab but lands without context is a version of the same problem. You have files. You may even have a great many of them. But files are not knowledge.

The layer that's missing: context, continuity, and scientific intent

Every experiment arrives in the world carrying more than its raw outputs. It carries the question it was designed to answer. The protocol that shaped its execution. The decisions made when something didn't go as expected. Its relationship to the experiments that preceded it and the ones that are meant to follow.

When data is captured without that context, stripped from its place in the scientific process as it moves between systems, something important is lost. Results become difficult to interpret without reconstructing the circumstances that produced them. Reproducing an experiment means relying on memory, email threads, or institutional knowledge that may no longer be available.

Scaling a workflow means scaling all of that fragility alongside it.

This is not a failure of automation. It is a failure of continuity. And it is where the prevailing definition of lab orchestration reaches its limit.

True lab orchestration does not just move data. It preserves the meaning of data as it moves, anchoring results to their place in a scientific workflow and maintaining the thread of intent from experiment design through execution, analysis, and decision. It treats the experiment, not the file, as the unit of value.
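To make the difference between "the file" and "the experiment" concrete, here is a minimal Python sketch under stated assumptions. The names `ExperimentRecord`, `Decision`, and `lineage`, and all example values, are hypothetical and illustrative only; they are not part of Dotmatics Luma or any other product. Each record keeps its raw output files alongside the question, the protocol, the decisions made during the run, and a link to the experiment it builds on, so the history of a program can be reconstructed from the data itself.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Decision:
    """A judgment call or deviation made during execution (hypothetical schema)."""
    step: str
    rationale: str


@dataclass
class ExperimentRecord:
    """Illustrative record that keeps results attached to their scientific context.

    All field names are hypothetical assumptions, not a real product's data model.
    """
    experiment_id: str
    question: str            # what the run was designed to answer
    protocol: str            # identifier of the protocol that shaped execution
    result_files: List[str]  # raw outputs: kept, but not the unit of value
    decisions: List[Decision] = field(default_factory=list)
    parent_id: Optional[str] = None  # the experiment this one builds on


def lineage(records: Dict[str, ExperimentRecord], experiment_id: str) -> List[str]:
    """Follow parent links back to the start of the program (assumes no cycles)."""
    chain: List[str] = []
    current = records.get(experiment_id)
    while current is not None:
        chain.append(current.experiment_id)
        current = records.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))  # oldest experiment first


# Hypothetical example data: two linked runs in one research program.
exp1 = ExperimentRecord("EXP-001", "Does compound A inhibit target X?",
                        "assay-v1", ["plate1.csv"])
exp2 = ExperimentRecord("EXP-002", "Dose-response of compound A against target X",
                        "assay-v2", ["plate2.csv"],
                        decisions=[Decision("incubation",
                                            "Extended after weak signal in EXP-001")],
                        parent_id="EXP-001")
records = {r.experiment_id: r for r in (exp1, exp2)}

print(lineage(records, "EXP-002"))  # ['EXP-001', 'EXP-002']
```

A production system would also need versioned protocols, instrument-level provenance, and safeguards on lineage links; the point of the sketch is only that context travels with the result instead of living in someone's memory or inbox.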

Why AI makes this urgent

For years, the cost of lost context was real but manageable. Teams absorbed the friction. They rebuilt what was missing. They made do.

That calculus has changed.

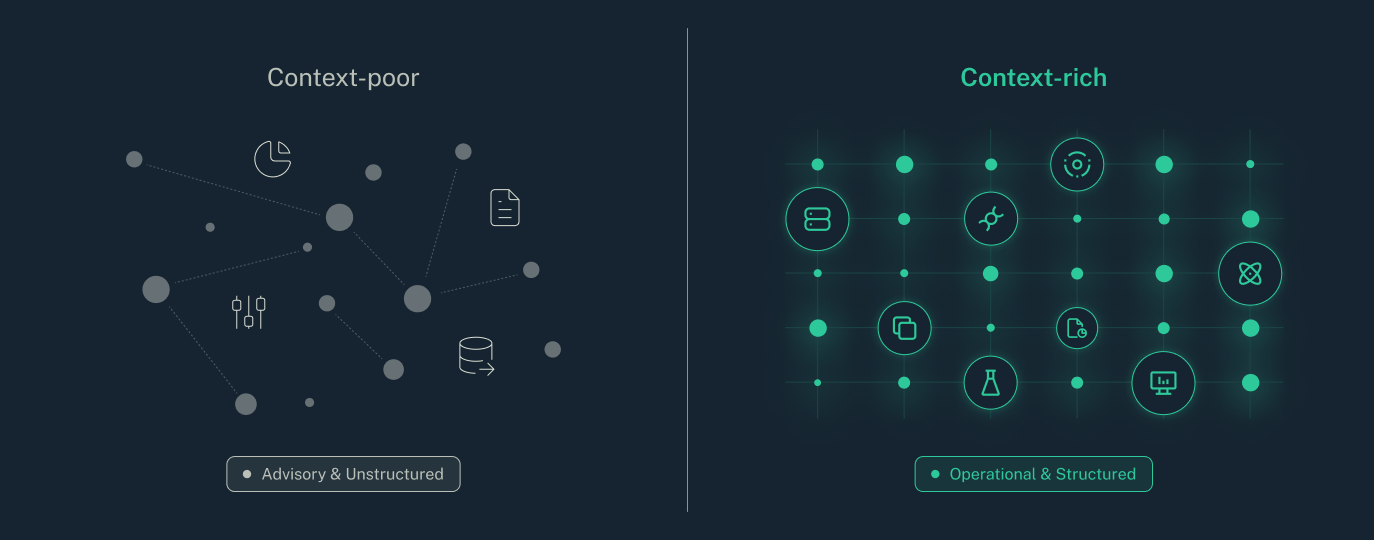

AI is arriving in the research lab with significant promise: the ability to identify patterns across large experimental datasets, to suggest the next meaningful direction, to accelerate the pace of iterative discovery. But that promise rests on a foundation that many labs have not yet built.

An AI system that can see only narrow slices of decontextualized data is not a discovery partner. It can summarize what is in front of it. It can find correlations in the numbers. What it cannot do (and what no AI system can do) is reason meaningfully about whether a research program is moving in the right direction without knowing where that program has been, what was tried, what was learned, and why the current experiment was designed the way it was.

Most scientific organizations envision AI that offers intelligent guidance on next steps, enables closed-loop experimentation, and delivers analysis spanning entire research programs rather than individual assays. Every part of that vision depends on data that is grounded in its scientific context. AI needs the whole picture. Orchestration is how you give it one.

What lab orchestration actually requires

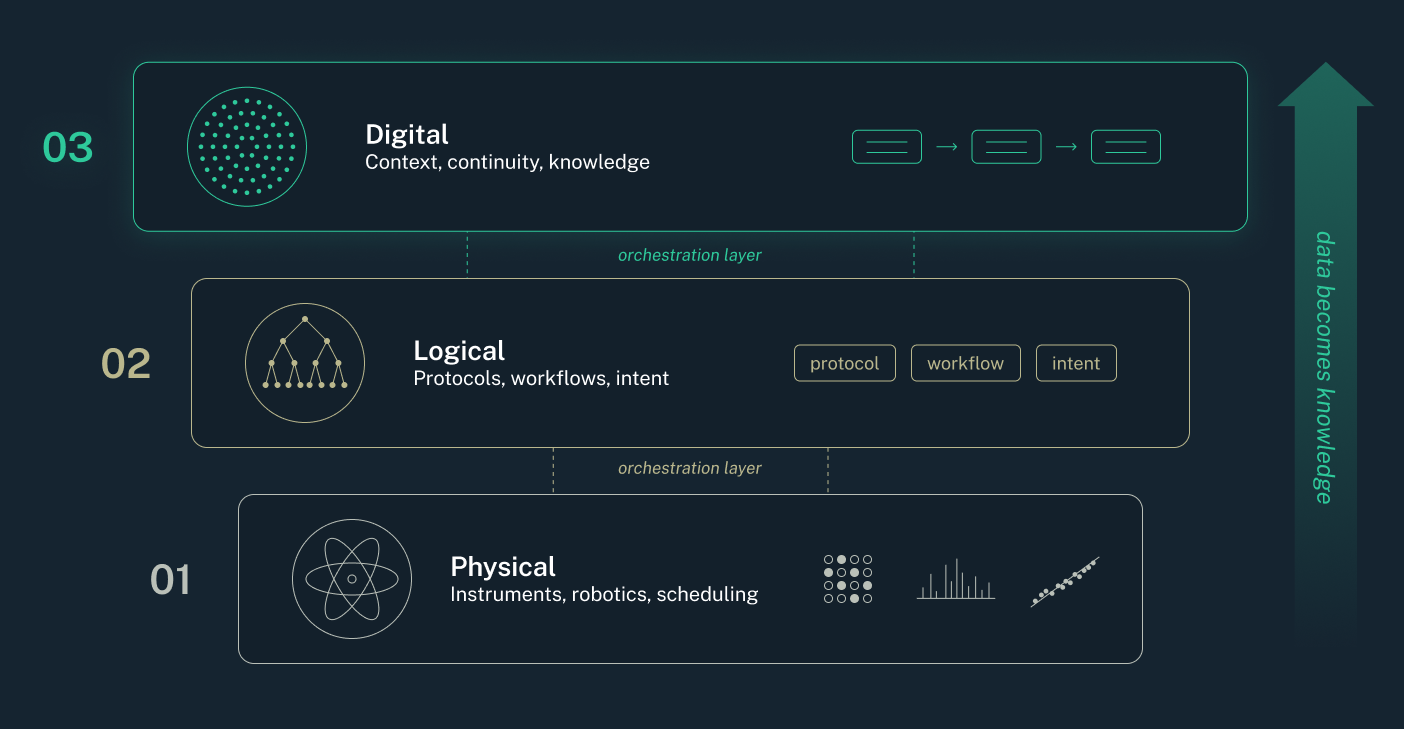

A complete definition of lab orchestration has to operate across three domains at once.

The physical domain is where the science happens: instruments generating data, robotic systems executing workflows, scheduling platforms coordinating the movement of samples and the sequencing of tasks. This layer is well-served by dedicated automation and scheduling tools, platforms purpose-built to control equipment, manage queues, and keep physical operations running smoothly.

The logical domain is the structure that gives physical work its meaning: experimental protocols, workflow design, business rules, the sequence of decisions that shape how a study is run. This is where scientific intent lives before it becomes action.

The digital domain is where data must land to become knowledge: systems that capture experimental outputs alongside their context, connect results to the workflows that produced them, and maintain continuity across the full arc of a research program, across teams, across time, and across the inevitable evolution of tools and methods.

All three have to work together. Physical automation without digital continuity produces data that cannot be trusted at scale. Digital systems without physical connectivity are disconnected from the reality of what actually happened at the bench. The logical layer, the scientific process itself, is what gives the whole system its coherence. True orchestration is the connective tissue between all three. And the weakest link, in most labs today, is the connection between the physical and the digital: not the movement of files, but the preservation of meaning as data crosses that boundary.

Dotmatics Luma: Building the foundation that discovery requires

The labs that will lead the next decade of scientific discovery are not simply the most automated. They are the ones building digital foundations capable of sustaining scientific knowledge over time: systems where experimental intent, execution data, and decision context remain connected as research scales, as teams change, and as AI becomes a genuine partner in the work.

That foundation requires more than ingestion. It requires orchestration in the fullest sense: a digital layer that understands the structure of scientific work, not just the shape of data files.

Dotmatics approaches this through Luma, a scientific intelligence platform designed to receive data from the physical lab, however complex that environment may be, and preserve its scientific context as it enters the digital domain. Luma connects with the scheduling and automation platforms that manage physical execution, and ensures that what flows upstream is not just data, but data that knows where it came from, what it belongs to, and what it is meant to inform.

Luma is a composable, low-code platform that works seamlessly with Luma Lab Connect, lab integration software that lets teams unlock the full value of their scientific instrument data through automated ingestion, scientific data modeling, and cutting-edge data management.